Thank you for learning! A preface from AI itself:

AI is not magic. It is engineering: systems that learn patterns from data and produce outputs under constraints.

What makes AI feel “magical” is that modern models can generate text, images, and code that look intelligent. What makes AI dangerous is that these same models can be confidently wrong, biased, or misused.

This textbook is written for builders, operators, creators, and students who want:

a dependable mental model of AI

a practical workflow for using it safely

a reliability mindset (tests, rubrics, verification)

Copyright + publishing

Copyright © March 15, 2026

SatSon Publishing. All rights reserved.

No part of this book may be reproduced or distributed without permission, except for brief quotations in reviews.

INTRODUCTION

Generative AI is now a default layer in modern work. But many people still use it like a vending machine: ask a question, take an answer, and hope it’s right.

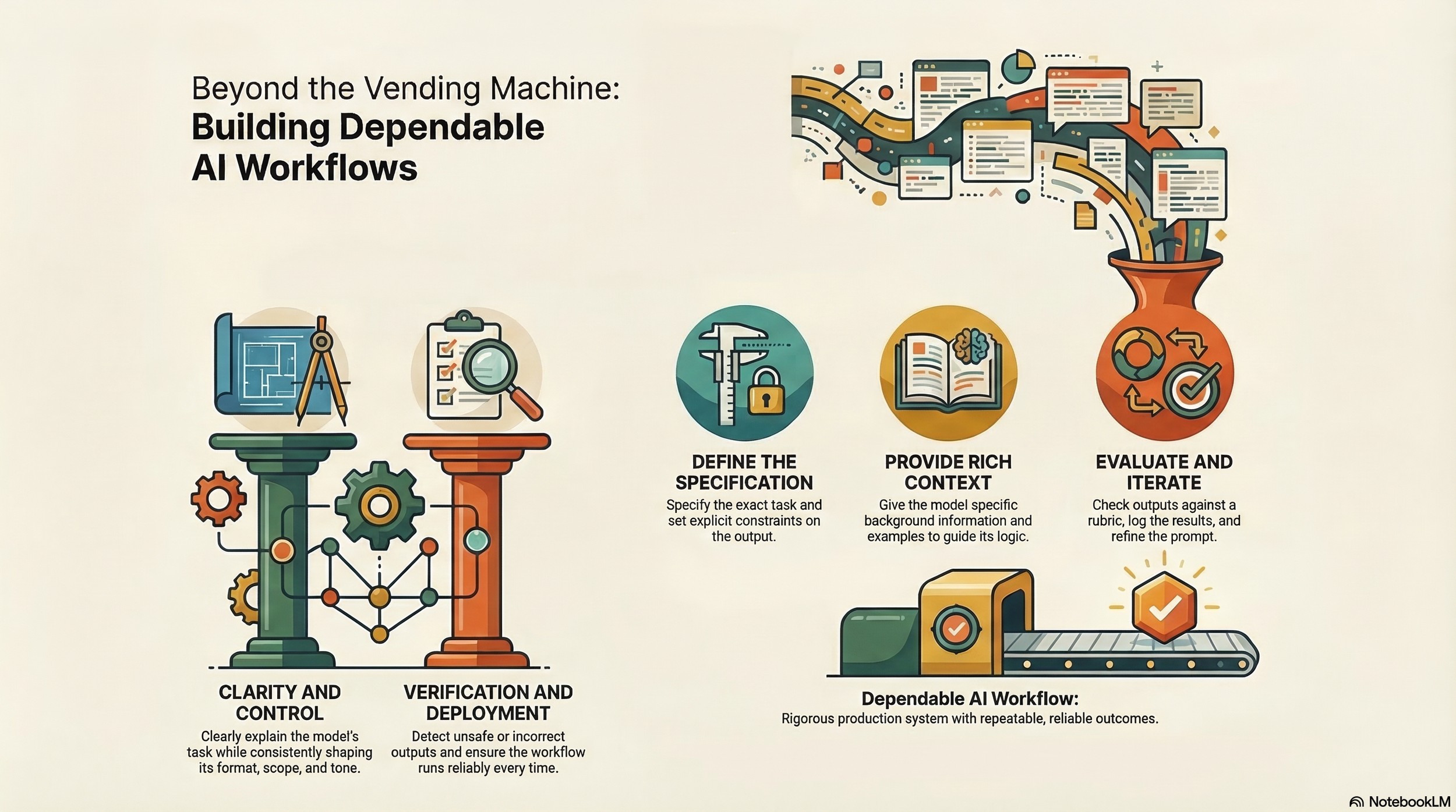

This textbook treats AI the way high-performing teams treat every other production system: as a workflow with inputs, constraints, tests, logs, and explicit definitions of “done.” You’ll learn to write prompts as specifications, evaluate outputs with repeatable checks, and build small agent systems that can plan, use tools, and operate under guardrails.

The goal is not “better prompting.” The goal is dependable outcomes:

Clarity: you can explain what the model is supposed to do.

Control: you can shape format, scope, and tone consistently.

Verification: you can detect when it is wrong or unsafe.

Deployment: you can run the same workflow tomorrow, not just once.

Throughout the book you’ll see the same pattern repeated:

Specify the task.

Constrain the output.

Provide context and examples.

Evaluate against a rubric.

Log results and iterate.

If you do these five steps well, you can ship AI-assisted work quickly without gambling on accuracy.
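The five-step pattern can be sketched as a small, testable loop. What follows is a minimal Python sketch under stated assumptions, not a prescribed implementation. `PromptSpec`, `run_once`, and the rubric checks are hypothetical names invented for illustration. `fake_model` is a stand-in for whatever model call you actually use and is not a real API.

```python
import json
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PromptSpec:
    """Hypothetical prompt specification covering steps 1-3 of the pattern."""
    task: str                                          # 1. Specify the task
    output_rules: list[str]                            # 2. Constrain the output
    examples: list[str] = field(default_factory=list)  # 3. Provide context and examples

    def render(self) -> str:
        """Assemble the specification into a single prompt string."""
        lines = [f"Task: {self.task}", "Output rules:"]
        lines += [f"- {rule}" for rule in self.output_rules]
        if self.examples:
            lines.append("Examples:")
            lines += [f"- {example}" for example in self.examples]
        return "\n".join(lines)


# 4. In this sketch, a rubric is a set of named pass/fail checks applied to the raw output.
Rubric = dict[str, Callable[[str], bool]]


def is_valid_json(text: str) -> bool:
    """Pass only if the output parses as JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


def summary_within_limit(text: str, limit: int = 25) -> bool:
    """Pass only if the output is a JSON object whose 'summary' has at most `limit` words."""
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and len(str(data.get("summary", "")).split()) <= limit


def run_once(spec: PromptSpec, model: Callable[[str], str],
             rubric: Rubric, log: list) -> tuple[str, bool]:
    """Render the spec, call the model, score the output, and append a log entry."""
    prompt = spec.render()
    output = model(prompt)
    scores = {name: check(output) for name, check in rubric.items()}
    passed = all(scores.values())
    # 5. Log every run so results can be compared when you iterate on the spec.
    log.append({"prompt": prompt, "output": output, "scores": scores, "passed": passed})
    return output, passed


def fake_model(prompt: str) -> str:
    # Stand-in for a real model call (assumption): always returns the same JSON.
    return '{"summary": "Revenue rose 4% in Q3."}'


spec = PromptSpec(
    task="Summarize the quarterly report in one sentence.",
    output_rules=["Return JSON with a single key 'summary'.", "Use at most 25 words."],
)
rubric: Rubric = {"valid_json": is_valid_json, "summary_under_25_words": summary_within_limit}
run_log: list = []
output, passed = run_once(spec, fake_model, rubric, run_log)
print(passed)  # True
```

To iterate, edit the spec or the rubric and rerun. `run_log` keeps every attempt, so you can see whether a change actually improved results. In real use, you would replace `fake_model` with your own model client.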

Table of contents

Part I — Foundations

What AI Is (and Isn’t)

Data, Learning, and Generalization

Models, Parameters, and Training (Conceptual)

Generative AI and LLMs: Why They Work (and Why They Fail)

Part II — Prompting and Human-in-the-Loop Control

Prompts as Specifications (Goals, Constraints, Acceptance Criteria)

Output Control (Formats, Schemas, Style)

Few-Shot Prompting and Examples

Rubrics and Self-Critique Loops

Part III — Research, Sources, and Claim Hygiene

Evidence vs Interpretation

Claim Packets and Source Discipline

Research Briefs for Decisions

Part IV — Evaluation and Reliability

Accuracy Tests and Consistency Checks

Adversarial Inputs and Failure Modes

Safety, Bias, and Guardrails

Versioning, Test Suites, and Improvement Logs

Part V — Workflows and Automation

Prompt Libraries, Templates, and Variables

Run Logs, Audit Trails, and Human Gates

Turning One-Off Outputs into Pipelines (SOPs)

Part VI — Agent Systems

What “Agentic” Means

Tools (Search, Retrieve, Write, Verify)

Memory: What to Store and Why

Debugging Agents

Reliability Patterns (Checkpoints, Thresholds, Escalation)

Part VII — Capstone

Choose a Track (Creator / Ops / Research / Sales)

Build an “AI Coworker” Workflow End-to-End

Deployment Checklist and Maintenance Plan